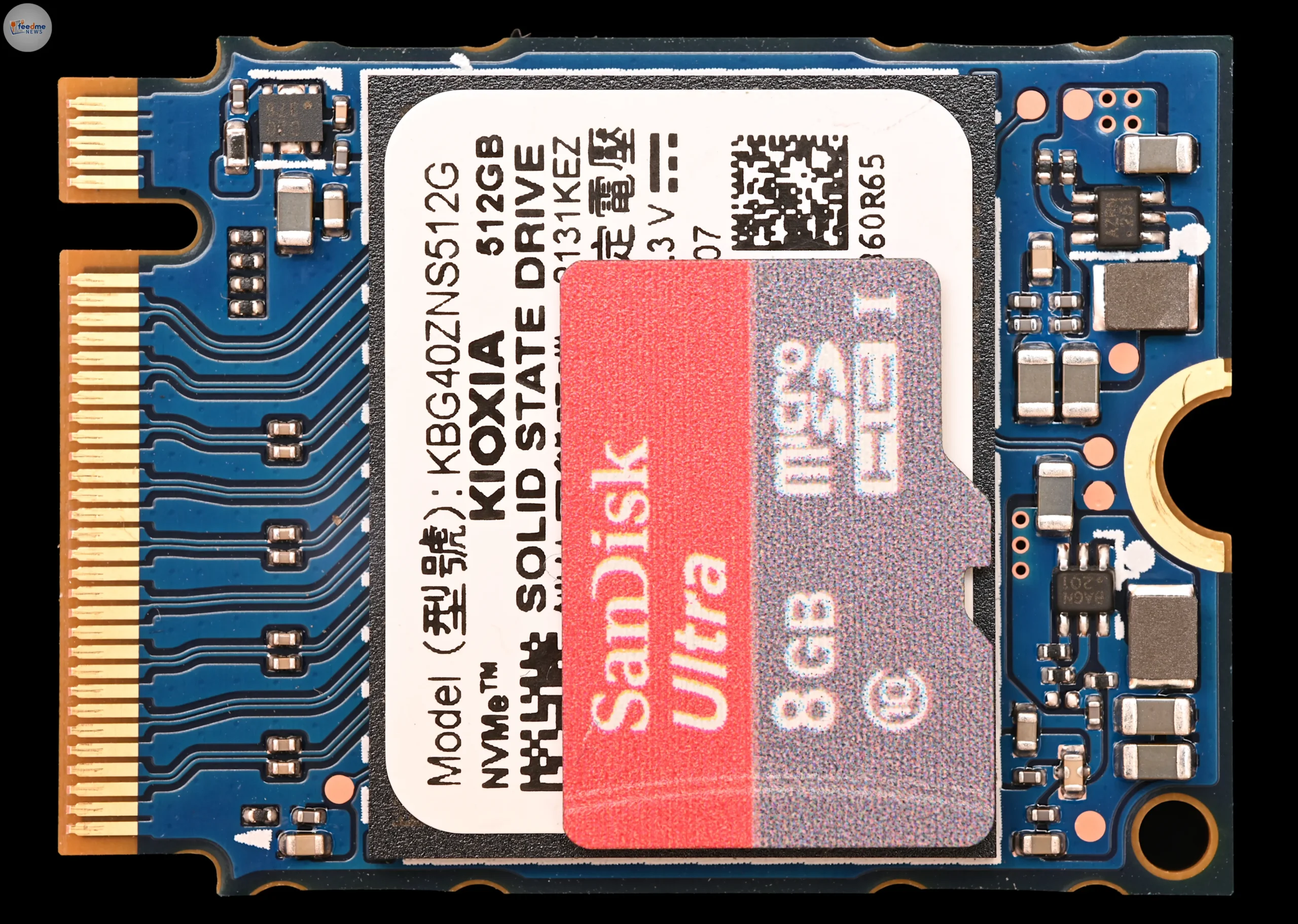

Kioxia has unveiled a new solid-state drive, the GP Series, that uses Storage Class Memory and targets millions of IOPS for feeding data to GPUs. The company positions the device as a way to expand the memory that GPUs can access directly, easing one of the main pain points in today’s AI infrastructure: keeping high-bandwidth GPU memory fed without stalls. If the GP Series delivers on that goal, it could change how data centers design systems for training and running large AI models, where both speed and predictability matter. Public technical details remain limited, but the direction is clear: memory and storage are converging near the GPU, and Kioxia wants to sit at that junction.

The launch came on Sunday, March 22, 2026, as reported by TechRadar Pro. Kioxia is pitching the GP Series at high-performance AI workloads. The initial reporting did not name an event location, and broader specifications were not disclosed.

Why GPU memory has become the AI system bottleneck

Modern AI workloads demand that GPUs process vast amounts of data on tight timing budgets. GPUs rely on high-bandwidth memory (HBM) for raw speed, but HBM is scarce and expensive. Teams often face a trade-off: either fit models and data into limited HBM or shuttle them in and out of slower tiers. That movement can stall compute. When thousands of GPU cores wait for data, performance and efficiency drop fast.
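
A back-of-the-envelope model makes the stall concrete. Assuming the fetch of the next batch overlaps perfectly with compute on the current one (an idealization, not a measured property of any product), the GPU idles only when fetching takes longer than computing. The function name and timings below are hypothetical.

```python
def gpu_utilization(compute_ms: float, transfer_ms: float) -> float:
    """Fraction of each step the GPU spends computing.

    Assumes perfect overlap: the next batch is fetched while the current one
    is processed, so each step lasts as long as the slower of the two.
    """
    if compute_ms <= 0 or transfer_ms < 0:
        raise ValueError("compute_ms must be positive and transfer_ms non-negative")
    step_ms = max(compute_ms, transfer_ms)
    return compute_ms / step_ms


# Hypothetical timings: 10 ms of compute per batch.
print(gpu_utilization(10.0, 8.0))   # 1.0: data arrives in time
print(gpu_utilization(10.0, 25.0))  # 0.4: the data path is the bottleneck
```

In the second case the GPU sits idle for 60% of every step, which is the gap a faster intermediate tier aims to close.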

Bringing a lower-latency, higher-IOPS storage tier closer to the GPU aims to bridge this gap. Rather than relying only on system RAM or distant storage, an intermediate layer can serve batches, embeddings, and features at high rates. Inference pipelines with many small reads can benefit from high IOPS, while training jobs need steady throughput and predictable latency. The GP Series, built on Storage Class Memory, targets this middle ground.

What Storage Class Memory brings to the stack

Storage Class Memory (SCM) describes memory-like storage that sits between fast but volatile DRAM and slower but denser NAND flash. Historically, SCM products have offered lower latency than typical SSDs along with the persistence that DRAM lacks. The class spans various technologies and designs, but the goal is the same: make storage behave more like memory for critical workloads.

For data centers, SCM can reduce the number of hops between compute and data. It can also simplify caching and tiering strategies by making a larger, faster working set available to accelerators. That matters for AI, where input pipelines, vector databases, and feature stores strain traditional SSDs. While initial public reports do not say which SCM technology the GP Series uses, Kioxia’s move signals growing interest in memory-tier innovation after years in which attention centered mostly on GPU chips.

Millions of IOPS: a headline metric with real-world caveats

Kioxia’s positioning around “millions of IOPS” fits the needs of latency-sensitive AI tasks. But IOPS alone does not define performance. Workloads vary. Small, random reads stress metadata and queue depth. Mixed read-write patterns tax endurance and controller logic. Large-batch training runs need sustained throughput and tight tail latency, not just high peak operations.
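
Two standard relationships show why an IOPS figure needs context. Throughput is IOPS multiplied by transfer size, and by Little's law, sustained IOPS equals the number of requests in flight (queue depth) divided by average latency. The helper names and numbers below are illustrative assumptions, not Kioxia specifications.

```python
def throughput_gb_per_s(iops: float, block_bytes: int) -> float:
    """Bandwidth implied by an IOPS figure at a fixed transfer size, in decimal GB/s."""
    return iops * block_bytes / 1e9


def iops_from_littles_law(queue_depth: int, avg_latency_us: float) -> float:
    """Little's law: sustained IOPS = requests in flight / average latency (seconds)."""
    return queue_depth * 1_000_000 / avg_latency_us


# Illustrative values only.
# 1 million 4 KiB reads per second is about 4.1 GB/s, small next to the
# terabytes per second that HBM delivers on current accelerators.
print(round(throughput_gb_per_s(1_000_000, 4096), 2))  # 4.1
# At 100 us average latency, sustaining 1 million IOPS needs 100 requests in flight.
print(iops_from_littles_law(100, 100.0))  # 1000000.0
```

The second result is why a headline IOPS number usually assumes a deep queue: at a shallow queue depth, the same device delivers far fewer operations per second.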

Teams will look for independent measurements across read sizes, queue depths, and mixed workloads, along with tail-latency data. They will also want to know how well the GP Series integrates with GPU-direct pathways that cut the CPU out of the data path. Without that, some IOPS gains can vanish under software overhead. Early messaging suggests Kioxia has the right target, but real impact will depend on end-to-end behavior in production stacks, not a single top-line number.
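
Tail latency is usually reported as high percentiles of per-request latency. A minimal sketch using the nearest-rank method, assuming latency samples collected from a benchmark run; the sample values are made up for illustration.

```python
import math


def percentile(samples: list[float], p: float) -> float:
    """Nearest-rank percentile: smallest sample with at least p% of samples at or below it."""
    if not samples or not 0 < p <= 100:
        raise ValueError("need at least one sample and 0 < p <= 100")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


# Hypothetical per-read latencies in microseconds: mostly fast, a few slow outliers.
latencies_us = [80.0] * 97 + [400.0, 900.0, 2500.0]
print(percentile(latencies_us, 50))  # 80.0: the median looks healthy
print(percentile(latencies_us, 99))  # 900.0: the tail tells a different story
```

A GPU waiting on a batch assembled from many reads is held up by the slowest one, so p99 and beyond often matter more than the average.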

Feeding HBM without drowning the CPU

Many AI clusters still route storage traffic through the CPU and system memory, creating congestion and latency spikes. The industry has worked to enable direct data paths from storage to GPUs to avoid those chokepoints. The GP Series pitches itself as a device that can expand “GPU-accessible” memory. That phrase signals a design that reduces handoffs and cuts round trips between the accelerator and storage.

In practice, success here depends on ecosystem support. Kernel drivers, DMA engines, and framework integrations must handle parallel queues and large working sets. Schedulers need to prefetch data and coordinate multiple GPUs without duplication. If Kioxia’s hardware works with these software paths cleanly, it can reduce idle cycles on accelerators and improve total cost of ownership by making each GPU minute count.
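
One common way to let several GPUs read a shared dataset without duplication is to give each rank a disjoint, strided slice of a shuffled index list, similar in spirit to PyTorch's `DistributedSampler`. The sketch below illustrates that idea plus a bounded prefetch window; `shard_indices` and `prefetch_plan` are hypothetical helpers, not Kioxia software or any framework's actual implementation.

```python
import random
from collections.abc import Iterator


def shard_indices(num_samples: int, world_size: int, rank: int, seed: int) -> list[int]:
    """Return this rank's disjoint share of a globally shuffled index list.

    Every rank shuffles with the same seed, so all ranks agree on the order;
    each then takes every world_size-th element starting at its own rank.
    Shards can differ in length by one; frameworks often pad or drop to even them out.
    """
    order = list(range(num_samples))
    random.Random(seed).shuffle(order)
    return order[rank::world_size]


def prefetch_plan(indices: list[int], window: int) -> Iterator[tuple[int, list[int]]]:
    """Yield (current index, indices to fetch ahead) for a bounded prefetch window.

    A real loader would issue reads for the look-ahead indices asynchronously
    while the GPU processes the current sample.
    """
    for i, idx in enumerate(indices):
        yield idx, indices[i + 1 : i + 1 + window]


world_size = 4
shards = [shard_indices(10, world_size, rank, seed=42) for rank in range(world_size)]
print(sorted(i for shard in shards for i in shard) == list(range(10)))  # True: each sample read once
for current, ahead in prefetch_plan(shards[0], window=2):
    print(current, ahead)
```

Bounding the window keeps prefetching from flooding the fast tier with data the GPU will not need for a while.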

Data protection and governance near the accelerator

Speed alone does not win in enterprise AI. Data protection, access control, and audit trails matter just as much. When storage sits close to GPUs and serves many small, high-speed reads, the risk of exposure from misconfiguration rises. Teams need encryption at rest, strong key management, and clear isolation between tenants or jobs, especially in shared clusters.

Regulatory duties compound this. Sensitive data can move through inference pipelines at high speed. Logs, retention, and residency rules still apply. Even if the GP Series focuses on raw performance, buyers will look for standard controls: secure erase options, integration with existing key managers, and support for forensic logging. None of these features were detailed in the initial reporting, so potential adopters should ask for specifics before deploying at scale.

The missing pieces: specs, software, and cost

Kioxia’s early positioning leaves unanswered questions that buyers will press. They will want to know capacity ranges, endurance ratings, form factors, power draw, and interface details. They will also ask about sustained performance under mixed workloads, temperature behavior, and availability timelines. Without these, it’s hard to plan rack power budgets or decide which nodes in a cluster should host the new drives.

Software support will be pivotal. Operators need drivers and libraries that work cleanly with mainstream Linux distributions and popular AI frameworks. They also need guidance on data placement policies: what sits in HBM, what lives on SCM, and when to spill over to standard SSDs or network storage. These tuning decisions can erase gains if they add overhead. Early documentation and reference architectures will help teams get value faster, reduce missteps, and build trust.
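
A placement policy can be sketched as a greedy fill: rank data by access rate per gigabyte and place the hottest items in the fastest tier that still has room. This assumes known sizes and steady access rates; the tier names, capacities, and items are hypothetical, and real systems also weigh migration cost and shifting access patterns.

```python
from dataclasses import dataclass


@dataclass
class Item:
    name: str
    size_gb: float
    accesses_per_s: float


def place(items: list[Item], tiers: list[tuple[str, float]]) -> dict[str, str]:
    """Greedy placement: items with the most accesses per GB fill the fastest tier first.

    `tiers` is ordered fastest to slowest as (tier_name, capacity_gb).
    Items that fit in no tier are marked "spill" (for example, network storage).
    """
    remaining = {name: cap for name, cap in tiers}
    placement: dict[str, str] = {}
    for item in sorted(items, key=lambda it: it.accesses_per_s / it.size_gb, reverse=True):
        for tier_name, _ in tiers:
            if item.size_gb <= remaining[tier_name]:
                remaining[tier_name] -= item.size_gb
                placement[item.name] = tier_name
                break
        else:
            placement[item.name] = "spill"
    return placement


tiers = [("hbm", 80.0), ("scm", 400.0), ("ssd", 4000.0)]
items = [
    Item("weights", 40.0, 2000.0),
    Item("kv_cache", 30.0, 900.0),
    Item("embeddings", 300.0, 200.0),
    Item("dataset", 3000.0, 5.0),
    Item("archive", 5000.0, 0.1),
]
print(place(items, tiers))
# {'weights': 'hbm', 'kv_cache': 'hbm', 'embeddings': 'scm', 'dataset': 'ssd', 'archive': 'spill'}
```

Sorting by accesses per gigabyte, rather than raw access count, keeps a large but lukewarm object from crowding several small hot ones out of the fastest tier.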

What this means for AI infrastructure planning

Kioxia’s GP Series arrives as model sizes and datasets grow faster than HBM capacity. The industry has chased bigger accelerators, but feeding them is now the hard part. A performant SCM tier that GPUs can access more directly offers a practical way to stretch scarce HBM and keep compute busy. If it integrates well, it can also let teams right-size nodes and avoid overprovisioning expensive memory.

Even so, operations teams will watch for reliability, lifecycle support, and procurement certainty. Production clusters favor parts with consistent supply, clear warranties, and long-term firmware maintenance. They also weigh sustainability: faster storage that lets users finish jobs sooner can lower energy per task, but only if the device’s efficiency and duty cycles support that claim.
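
The energy argument reduces to simple arithmetic: energy per task is average power multiplied by job duration. A sketch with hypothetical numbers shows how faster storage can lower energy per task even when it draws extra power, and how it fails to when the speedup is too small.

```python
def energy_kwh(avg_power_kw: float, hours: float) -> float:
    """Energy for one job: average power times duration."""
    return avg_power_kw * hours


# Hypothetical node: 10 kW average draw, 5-hour job on existing storage.
baseline = energy_kwh(10.0, 5.0)    # 50 kWh
# A faster tier adds 0.2 kW but cuts the job to 4 hours.
faster = energy_kwh(10.2, 4.0)      # about 40.8 kWh: less energy despite higher power
# The same extra power with only a small speedup, to 4.95 hours.
marginal = energy_kwh(10.2, 4.95)   # about 50.5 kWh: more energy than the baseline
print(baseline, faster, marginal)
```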

Kioxia’s GP Series SSD, built on Storage Class Memory and aimed at delivering millions of IOPS to GPUs, highlights where AI infrastructure is heading: bringing memory-like storage closer to accelerators to cut idle time and raise efficiency. The March 22 announcement signals intent, but many practical details remain out of view. Buyers will look for hard numbers, ecosystem readiness, and independent benchmarks before they change designs. If Kioxia can show consistently low latency, clean software paths, and sensible TCO, the GP Series could shape how data centers tier memory for AI. If not, it still underscores a broader shift: in the AI era, system design will favor tighter coupling between compute and data, where every microsecond matters and storage starts to act like memory.